Rolling Research: Faster Decisions, Better Ad Concepts

Role: Lead UX researcher | Client: Major technology platform | Methods: Moderated interviews, iterative prototype concept testing | Timeline: Rolling 4-week cycles across multiple product sub-teams

Overview

A major technology platform needed fast, reliable user insight to make product decisions across new advertising formats. Two questions drove the work: Which concepts are worth moving forward, and how can we design ads that feel relevant and useful rather than intrusive?

I built and ran a rolling research program that gave teams evidence-backed answers on a 4-week cadence, creating a repeatable research engine that compressed iteration cycles, reduced risk, and helped the team move winning concepts to market faster.

As the program matured, I identified recurring patterns across verticals and proactively surfaced those insights to incoming teams, building a cross-vertical knowledge layer into the program's foundation.

Project Challenges

High ad-aversion. Users’ hatred for ads was palpable. Breaking through surface negativity meant structuring interview probes to uncover behavioral cues and value drivers.

New vertical every 3–4 weeks. Each cycle brought a new product area and 3–6 prototypes, so rapid onboarding and a reusable template bank were crucial.

Inconsistent readiness. Vertical teams varied in preparation. I worked with leadership on both sides and helped establish alignment on what teams needed to make each cycle effective.

Research Approach & Methods

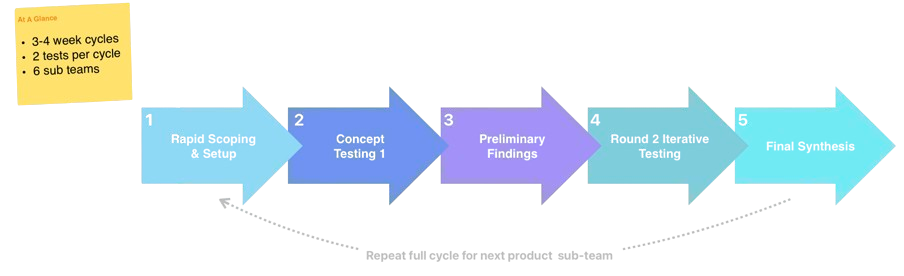

Rather than a single study, this program functioned as a repeatable research system, purpose-built for fast-moving teams.

Phase 1 - Rapid Scoping & Setup

Aligned with each sub-team on goals, decision points, and prototype variations.

Created stimulus decks, recruited participants, and quickly ramped up on 3–6 evolving concepts per cycle.

Phase 2 - Concept Testing Round 1

Moderated sessions to evaluate clarity, trust, and relevance, separating ad-aversion from actionable product insight.

Phase 3 - Preliminary Findings

Delivered a short midpoint readout to build early alignment, clarify what to refine, and sharpen hypotheses for Round 2.

Phase 4 - Round 2 Iterative Testing

Tested updated concepts and identified which refinements meaningfully improved user experience.

Phase 5 - Final Synthesis

Consolidated insights across rounds to guide what to refine, pause, or rethink before transitioning to the next sub-team’s cycle.

Key Themes

1. Credibility and relevance are the path through ad-aversion

Users' resistance to ads was immediate and consistent. But when ads surfaced the right information (specialization, reviews, images, known credibility markers), users shifted from avoidance to engagement. The barrier wasn't ads themselves; it was ads that felt generic or untrustworthy. Designing for relevance and credibility aligned user expectations with monetization needs and created a clear path toward ads that felt useful rather than intrusive.

2. Cross-vertical patterns created an ads research foundation

Across verticals, the same user behaviors kept surfacing: consistent information needs, credibility signals, and interaction patterns that influenced trust and comprehension regardless of vertical. I proactively connected these patterns across cycles, shared prior findings with incoming teams, and referenced earlier work in new reports. This created early momentum toward a more unified research foundation, where insights compound across cycles and teams build on each other's learning instead of starting from scratch.

3. Iteration pace shaped insight quality

The rapid cadence revealed which refinements meaningfully improved comprehension and trust, while deprioritizing changes that added complexity without user value. New ad formats were interpreted through the structure of existing experiences, especially on business pages and service listings.

What Made This Research Operationally Effective

This program worked because the system behind it was intentionally lightweight, repeatable, and built for speed, using:

Reusable frameworks. Applying consistent frameworks and connecting findings across teams compounded insight across the program.

Early consensus-building. Preliminary findings briefs helped teams refine prototypes quickly and avoid multi-week misalignment.

Flexible, high-velocity collaboration. Tight alignment with designers allowed for rapid prototype updates between rounds.

Signal-over-noise. Separating ad-aversion from meaningful product insight ensured decisions were rooted in user behavior, not gut reactions.

Impact

1. Cross-Vertical Knowledge Building

Identifying and surfacing insights that translated across verticals helped the program act as an evolving knowledge base instead of a series of isolated studies, giving the broader ads team a foundation for longer-term strategic decision-making.

2. Accelerated Decisions and More User-Aligned Ad Concepts

The 3–4 week cadence gave teams fast, evidence-backed direction on what to refine, what to pause, and what to explore next, shortening iteration cycles and reducing guesswork and risk. Findings guided improvements to credibility markers, layout and hierarchy, and where and how new ad formats appeared on familiar surfaces, shifting concepts from "bothersome" toward "potentially useful."

3. A Repeatable Research Engine for Multiple Teams

The program established a lightweight, scalable structure that made it easy for new sub-teams to onboard into a ready-made research workflow.

Teams gained earlier testing, clearer decision points, improved cross-functional alignment, and faster iteration windows.

Reflection

This program strengthened my ability to operate at the intersection of research, operations, and agile product development. Switching verticals every few weeks also sharpened my skills in rapid onboarding, high-velocity synthesis, and facilitating consensus on tight timelines.

Finally, being embedded in a rolling research program underscored the value of continuous evaluative research, especially in spaces where trust, clarity, and perceived usefulness shape whether an emerging ad experience succeeds or fails.